Author

Contact

michal.klempa@gmail.com

michalklempa.com

Public profiles

github.com/michalklempa

hub.docker.com/u/michalklempa

linkedin.com/in/michal-klempa

twitter.com/KlempaMichal

stackoverflow.com/users/3944551/michal-klempa

Projects

Docker

Maintainer of an open-source NiFi Registry Docker image with 100K+ downloads.

NiFi

Code contributor to Apache NiFi.

My projects using NiFi:

- ETL pipelines from various sources to Kafka with Avro encoding

- ASN.1 (BER/DER/PER) parsing and transformation pipeline, including development of custom Processors for NiFi

- SNMP data collection, transformation and storage

- SMS notification system based on NiFi, MySQL, gammu

- Unity3D model conversion automation, using S3 buckets as source and destination of models

Flink

Developed a rule-based customer notification engine. The engine is built on Apache Flink, with Apache Avro as the state serialization format, and runs in a Docker Swarm cluster. Multiple stream joins are performed before rule evaluation.

Knowledge and experience

Stream Processing

Apache Kafka and Confluent Platform: installation and configuration. Securing Kafka with Kerberos.

Kafka integration with NiFi, Flink and Spark.

Stream processing with Flink and Spark. Protocol Buffers and Avro as in-transit formats.

Apache NiFi: ETL pipelines with NiFi, Kafka, Flink, Schema Registry and Elasticsearch.

DevOps

Deployment: Docker Swarm, Kubernetes, Ansible, Vagrant, Terraform.

CI/CD: Jenkins Pipelines, GitLab CI, Artifactory.

Cloud: AWS (ECS, EMR, S3, VPC, Route 53, EC2), Google Cloud (GCE, GKE), Azure (HDInsight, AKS), DigitalOcean.

BigData

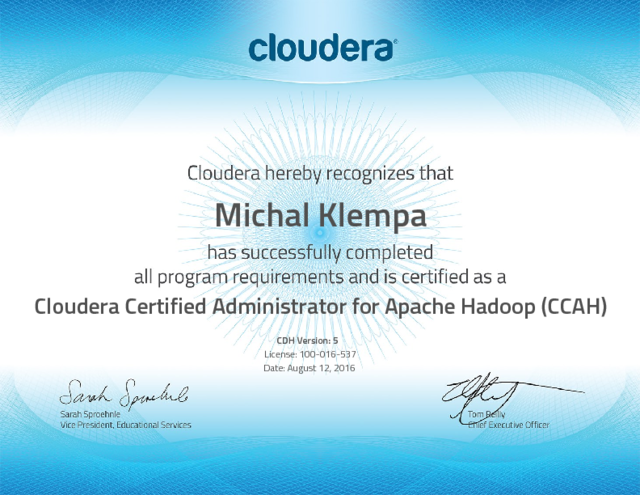

Data pipelines: Hadoop, Spark, NiFi, Hive, Pig, Sqoop. Data stewardship using Zeppelin with Scala and Spark. Hadoop administration: Apache Ambari and Cloudera Manager, Hadoop installation, tuning HDFS and YARN, and securing Hadoop with Kerberos and LDAP/AD integration. Certified for Cloudera and Hortonworks Hadoop distributions.

Background

Experience programming a custom OS kernel (C, assembler) running on a simulated CPU (MIPS R3000).

Java server-side technologies: Hibernate, MyBatis, Spring. RDBMSs: MySQL, PostgreSQL.

Java frontend frameworks: Vaadin, Spring MVC, Swing.